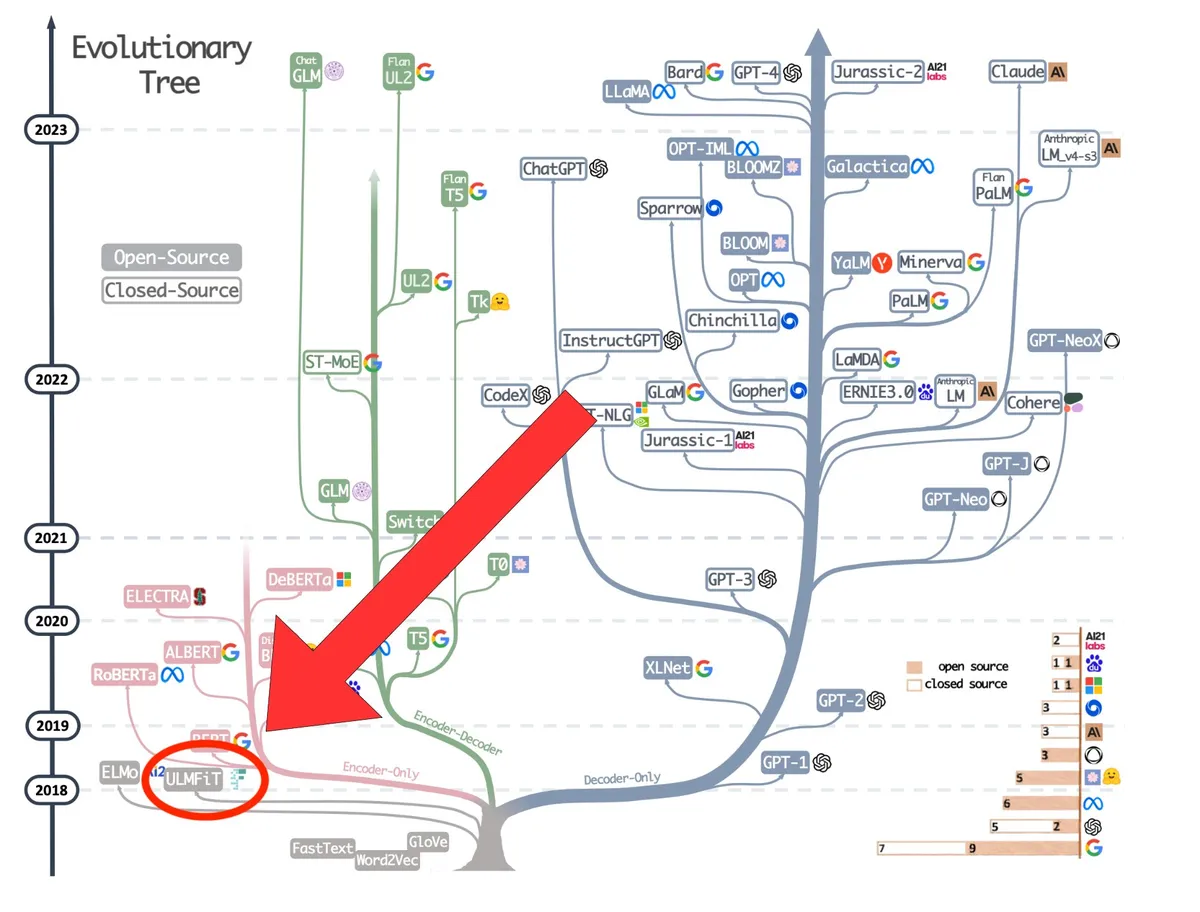

LLM Open Source History

Welcome everyone! Let's talk about the story that many forgot: LLM open source history.

The Genesis: ULMFiT (2018)

First, let's set the record straight. The first LLM was created by @jeremyphoward & @seb_ruder.

It was called: ULMFiT (2018)

Why? Everything is in the name:

- U: Universal

- LM: Language Model

- FiT: Fine-tuning

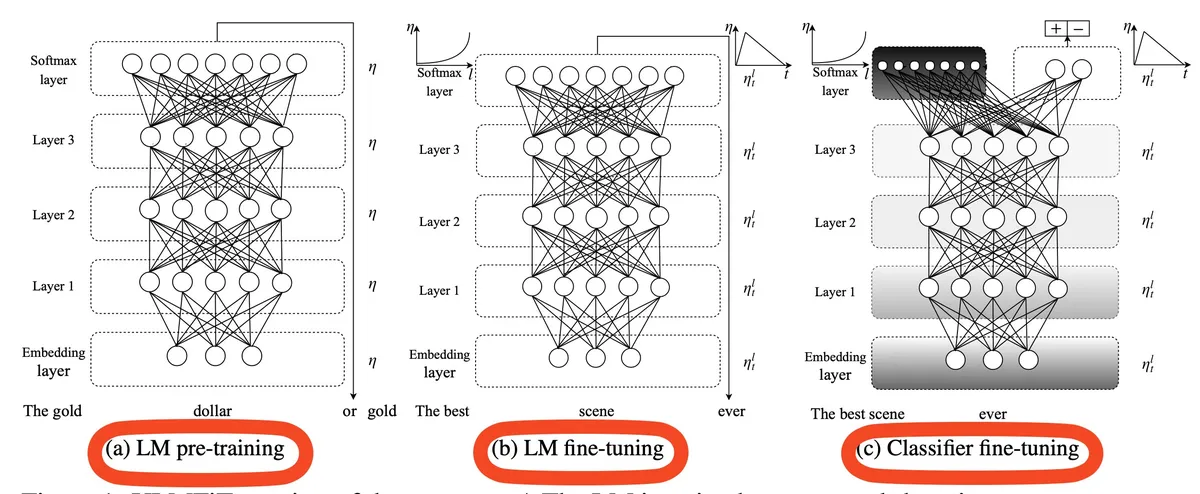

It was the first model that was pretrained on a large corpus and then finetuned for downstream applications. Figure 1 speaks for itself 😉

Open source 10/10: model weights & code.

The key difference from current LLMs: it was an LSTM, unlike what came next...

The Transformer Revolution: GPT-1 & 2 (2018-2019)

Radford demonstrated the effectiveness of the transformer and made it the go-to architecture for NLP.

He popularized the decoder-only architecture and was truly pivotal for the field.

GPT-2 scaled up both parameters and data, and this was the beginning of zero-shot learning.

The Turning Point: GPT-3 (2020)

The closed-source era began: at first GPT-3 was not accessible to everyone, you had to be whitelisted. After that, it was available only through a paid API. This was a first.

A supposedly open research lab declined to publish a model and made other labs pay to use it. It set a precedent.

Most importantly, it was not only the model weights that were closed: the code, the data, everything was closed.

Academia started to complain and to question OpenAI's commitment to its own charter. Hmm hmm.

This was the end of the fully open source research era in AI.

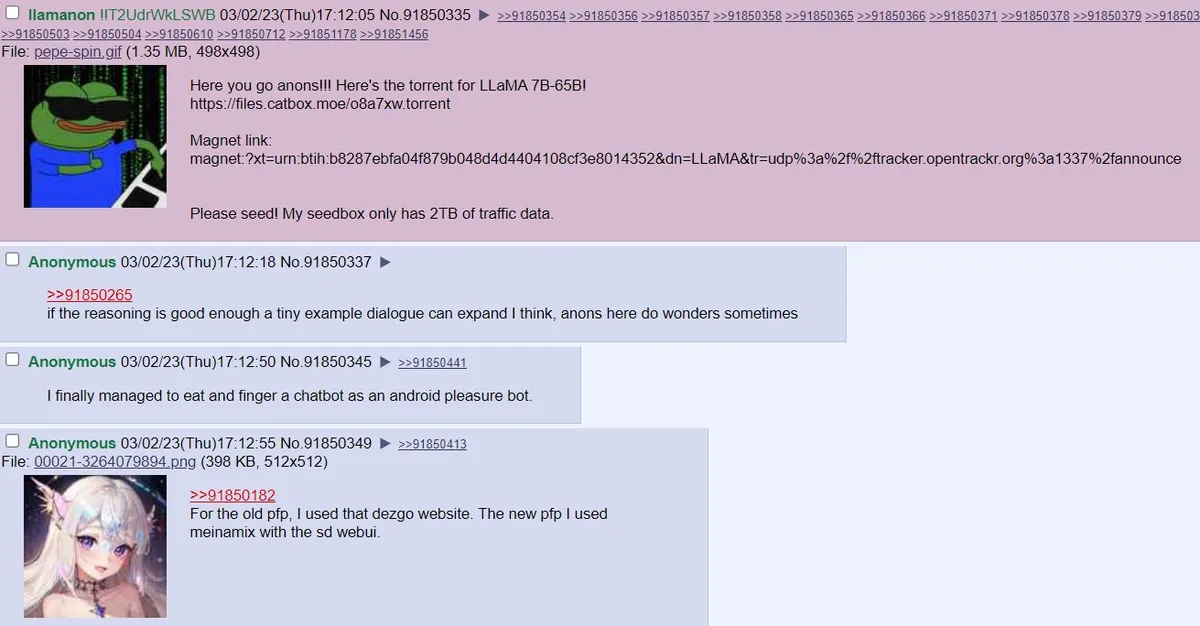

Llama's Leak

As ChatGPT became extremely popular in Nov 2022, it seemed that open source was dead.

But then something happened: Llama (the LLM trained by Meta) got leaked on 4chan. Instead of being mad, Meta saw it as an opportunity and embraced it.

They released: Llama and Llama 2.

A few employees saw it as an opportunity and founded Mistral.

The plan was: build European LLMs for Europe.

Open source as a contender strategy: Mistral (2023)

How do you face the big labs that have all the money, the GPUs, and the users?

-> Open source

As Arthur Mensch explains: open source is a great strategy for challengers.

And the execution was something:

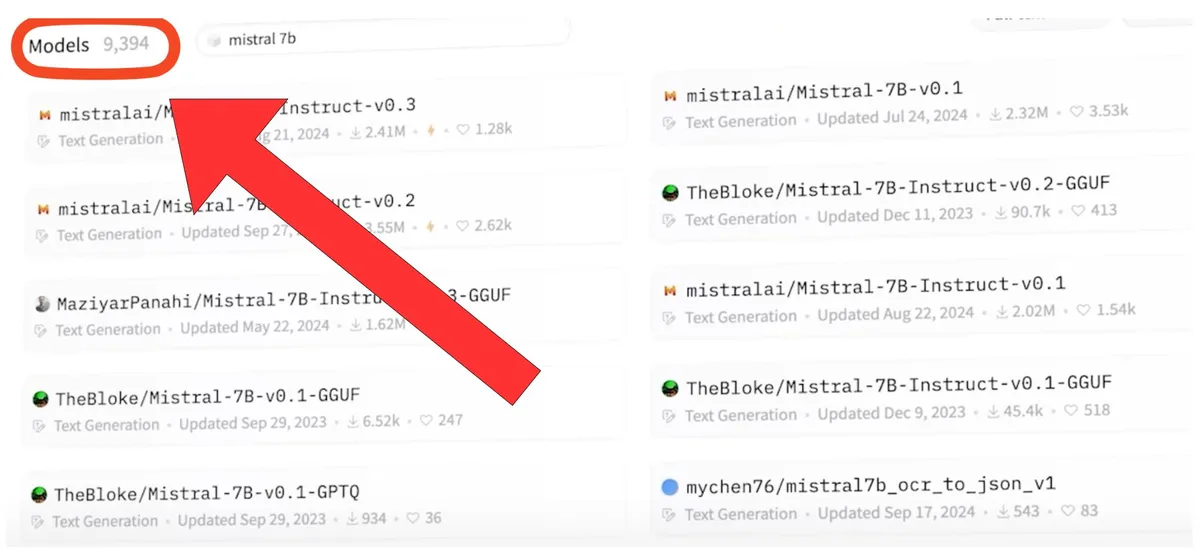

Guillaume Lample had the idea to directly drop the magnet link. Arthur Mensch posted it at 5am and it blew up... this was straight up insane.

No marketing, no nothing.

Just model weights, downloadable by everyone, this was freedom.

The community finetuned it for all sorts of use cases, including talking to dead people by @teknium.

In total, about 10k finetuned models were released on Hugging Face 🤗.

This was no accident: the model size was very well chosen, runnable and finetunable on a gaming PC.

The Finetuning Wave: LLMs for Everyone (2023)

While Llama 2 gave okayish results when finetuned, Mistral actually worked.

Plus, finetuning would divide your costs by 50.

The goal: finetune many models and orchestrate them.

That's what we did with Alessandro Duico at my previous startup AthenAI.
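To make the orchestration idea concrete, here is a minimal sketch, assuming a simple keyword router sitting in front of a few finetuned specialists. The model names, the keywords, and the `route` and `answer` helpers are all hypothetical, and the stubs stand in for real model calls. This is not how AthenAI did it, just one way it could look.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Specialist:
    """A finetuned model plus the keywords that should trigger it."""
    name: str
    keywords: List[str]
    generate: Callable[[str], str]


def make_stub(name: str) -> Callable[[str], str]:
    # Stand-in for a real inference call to a finetuned model (assumption).
    return lambda prompt: f"[{name}] {prompt}"


# Hypothetical specialists; in practice each would wrap its own finetuned model.
SPECIALISTS = [
    Specialist("sql-model", ["select", "table", "query"], make_stub("sql-model")),
    Specialist("summary-model", ["summarize", "tl;dr"], make_stub("summary-model")),
]
FALLBACK = Specialist("general-model", [], make_stub("general-model"))


def route(prompt: str) -> Specialist:
    """Pick the specialist with the most keyword hits, else the fallback."""
    text = prompt.lower()
    best, best_hits = FALLBACK, 0
    for specialist in SPECIALISTS:
        hits = sum(keyword in text for keyword in specialist.keywords)
        if hits > best_hits:
            best, best_hits = specialist, hits
    return best


def answer(prompt: str) -> str:
    """Route the prompt, then let the chosen model generate the reply."""
    return route(prompt).generate(prompt)


if __name__ == "__main__":
    print(answer("Summarize this article"))
    print(answer("Write a query to select rows from the users table"))
```

A real router would more likely be a small classifier or an LLM itself, but the shape stays the same: one cheap decision up front, then a small specialized model does the work.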

Open Source is all you need? Llama 3 (Apr 2024)

@joespeez introduced both the Llama 3 series and the 405B model at a Weights & Biases event in SF. This was big.

We called it: GPT-4 at home. At the time, GPT-4 was the only model able to do decent coding and science.

Open source was catching up fast!

Pushing the Boundaries: DeepSeek (2024/2025)

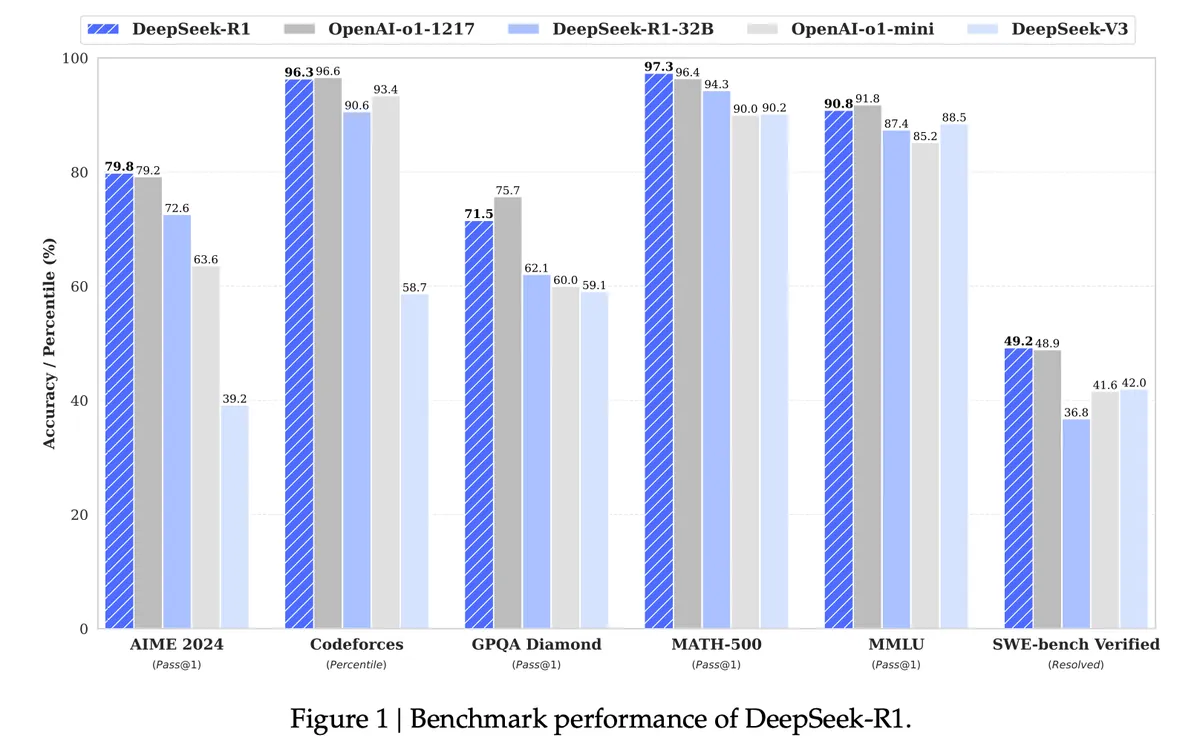

Okay, that was a tsunami. DeepSeek published 1 paper per month for a year and evolved the transformer with multi-head latent attention.

It made it possible to run models for 1/30th of the price, and it reduced training costs considerably.

Open source is back on top.
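One ingredient in the cheaper inference is a smaller key-value cache: with multi-head latent attention, the model caches one compressed latent vector per token (plus a small positional part) instead of full keys and values for every head. Here is a back-of-the-envelope sketch of that saving. All the dimensions below are illustrative assumptions, not figures from a specific DeepSeek model, and the cache is only one part of the overall cost story.

```python
def kv_elements_standard(n_heads: int, head_dim: int) -> int:
    """Cached values per token per layer with standard multi-head attention:
    one key and one value vector for every head."""
    return 2 * n_heads * head_dim


def kv_elements_latent(latent_dim: int, rope_dim: int) -> int:
    """Cached values per token per layer with a latent cache:
    one shared compressed vector plus a small positional component."""
    return latent_dim + rope_dim


def cache_bytes(elements: int, n_layers: int, seq_len: int,
                bytes_per_element: int = 2) -> int:
    """Total cache size for one sequence (2 bytes per element = 16-bit floats)."""
    return elements * n_layers * seq_len * bytes_per_element


# Illustrative configuration (assumed values, chosen for round numbers).
N_HEADS, HEAD_DIM = 32, 128
LATENT_DIM, ROPE_DIM = 512, 64
N_LAYERS, SEQ_LEN = 32, 4096

standard = kv_elements_standard(N_HEADS, HEAD_DIM)
latent = kv_elements_latent(LATENT_DIM, ROPE_DIM)

if __name__ == "__main__":
    print("standard cache bytes:", cache_bytes(standard, N_LAYERS, SEQ_LEN))
    print("latent cache bytes:  ", cache_bytes(latent, N_LAYERS, SEQ_LEN))
    print("reduction factor:    ", round(standard / latent, 1))
```

With these assumed numbers the cache shrinks by roughly 14x, which means longer contexts and bigger batches on the same GPU.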

Open Source Becomes the Norm?

In parallel, since the Mistral drop, every other lab outside of OpenAI has been open sourcing models: Google, Meta, Alibaba, Nvidia.

Funny that the one company that includes "open" in its name didn't release any open source model in 2 years.

But it seems that this is about to change.

A Personal Reflection on AI Safety

As someone who was part of an AI safety group back in Delft with @JanWehner436164, I was at first afraid of open sourcing such a technology. I thought that ill-intentioned individuals (terrorists, scammers, and such) would use it to harm society.

But then I realized that it was far more dangerous for only a few to control what's in the LLMs we use in our everyday lives. This was 1984.

Remember when Mistral raised $113M, the team's claim was: there are fewer than 100 people who can train an LLM right now...

Join the Conversation

Btw, this is just my work blog, where I share my reflections and questions.

I'll post every Sunday about my biggest learnings of the week.

Feel free to just learn with me :)

If you've read this far, you're an open source friend.

Q: If the gap between open source and closed models is small, are you now using open source models?

If so, what was the first one you started finetuning with?